Action adaptation

Prolonged exposure to a visual stimulus typically results in a visual “aftereffect”, where subsequent perception is biased for a short period afterwards. A good example of this phenomenon is the “waterfall illusion”: after watching the downward movement of a waterfall for about 1 minute, fixating the nearby stationary rock produces an illusory upward movement of the rock. Visual adaptation to simple visual stimuli and the resulting aftereffects have been well studied using psychophysics and neuroimaging techniques in humans, as well as single unit recording in non-human primates. The link between psychophysical phenomena and underlying neurophysiological mechanisms is relatively well understood for these simple stimuli. As such, psychophysical adaptation paradigms have proved very powerful in accessing the brain mechanisms underlying the perception of the adapted stimuli.

Recently our lab has demonstrated that we adapt to the actions of other individuals. This adaptation occurs at a high level in the visual system, where the actions themselves are coded. The results we find using psychophysical action adaptation paradigms in human observers show striking parallels with results obtained using single unit recording techniques in the temporal lobe of non-human primates. These results demonstrate that our psychophysical techniques can be used to access action coding mechanisms in the human brain, allowing us to study them whilst potentially bypassing expensive neuroimaging techniques.

Face adaptation

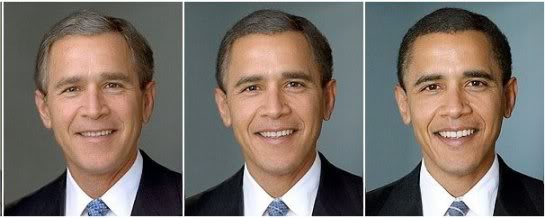

Below is an example of face identity adaptation. Stare for about 1 minute at the left-hand image of Bush, then move your gaze to the middle image. You should experience a vivid perception of Obama. This effect works in the opposite fashion if you instead stare at the image of Obama. The middle image is a morph, halfway between the images of Bush and Obama.

Adaptation to hand actions

Adaptation to grasping and placing actions shows that these hand actions are coded together, that their coding relies on the presence of an object in the hand, and that the underlying neural mechanisms are broadly sensitive to the view from which hand actions are seen.

We can film hand actions from multiple viewpoints simultaneously (at 50 Hz) in order to obtain stimuli for testing viewpoint sensitivity and the underlying view-tuning functions of the neural mechanisms coding goal-directed hand actions.

Adaptation to whole body actions

Adaptation to whole body actions indicates that we have separate populations of neurons in the brain that code forward walking and backward walking. Neural mechanisms that respond to walking appear to be view-independent and insensitive to identity.

We are currently investigating not only how actions are recognised by the visual system, but how traits are derived from actions. We are investigating both emotional action aftereffects and trustworthiness action aftereffects.

Funding provided by